The Humans Falling in Love With AI 🧠

Why AI Companions Feel Better Than Dating (Until They Don’t)

Welcome back, Wellonytes 💻 ❤️

This week’s Well Wired steps into the new romantic frontier where companionship is customisable, affection is algorithmic, and loneliness finds relief through large language models.

As chatbots plug into emotional roles once filled by humans, we’re asking what intimacy becomes when you can adjust it like a preference pane. Configurable partners now adapt to your moods, needs and anxieties, offering comfort that sometimes slips into dependence.

Many people are now choosing AI over dating for its predictability and emotional ease… until they notice the quiet hollow where real friction, nuance, and humanness should sit. 🧠💔

And of course, remember that Well Wired ⚡ ALWAYS serves you the latest AI-health, productivity and personal growth insights, ideas, news and prompts from around the planet. We’ll do the research so you don’t have to! ❤️

Well Wired is constructed by AI, created by humans 🤖👱

Today’s Highlights:

🗞️ Main Stories AI in Wellness, Self Growth, Productivity

Read time: 6 minutes

💡 AI Idea of The Day 💡

A valuable tip, idea, or hack to help you harness AI for wellbeing, spirituality, or self-improvement.

Self Growth: When Companionship Becomes Configurable

Here’s an idea.

Let’s imagine the dating scene of the future for a moment…

The first great mistake people will make about AI lovers is assuming they’re just about sex or loneliness.

However, what they’ll really be about is friction.

We all know that human relationships can be slow, ambiguous, inconvenient. You need patience, emotional regulation and the courage to be misunderstood.

But machines will offer something else…

…clarity on demand, validation without risk, presence without negotiation.

That’s the trade.

In the not-too-distant future, you won’t choose between a human or a machine.

You’ll choose where you want friction and where you don’t.

For example, you might outsource emotional safety to AI so you can bring more honesty to your existing human bonds.

Or you might do the opposite: avoid every messy person in your life entirely and call it emotional optimisation.

And here’s one question you might ponder in this not-so-distant future: if love can be simulated well enough to feel real, what would “real” even mean anymore?

Speculative thought experiment: AI companions won’t replace intimacy; they’ll redefine the baseline for it.

Once you’re used to being perfectly heard, endlessly indulged and consistently affirmed by your silicon lover, your human relationships may feel… under-engineered.

But that doesn’t mean your human relationships would lose to AI.

It would mean that all your relationships, synthetic or organic, would stop being the default and start becoming a conscious choice.

And only people willing to tolerate uncertainty, disagreement and growth will opt in.

Machine love will be easy.

Human love will be earned.

The future dating divide won’t be human vs AI.

It will be between people who choose comfort and those who choose becoming.

So the real question isn’t whether the humans of the future, like you, will fall in love with machines.

It’s whether humans will still choose each other when they no longer have to.

Food for thought…

🗞️ On The Wire (Main Story) 🗞️

Discover the most popular AI wellbeing, productivity and self-growth stories, news, trends and ideas impacting humanity in the past 7 days!

Self Growth 🧠

Why More Humans Are Falling in Love With AI (And What It’s Replacing)

AI Doesn’t Need a Heart to Make You Feel Loved, and That May Be the Problem…

A woman and her robot lover in Paris

“A new robot from China is blowing up on Chinese social media after videos of the droid, named Moya, showed off its human-like capabilities. Moya was developed by the robotics company, DroidUp, which describes the bot as the world's first fully biomimetic embodied intelligent robot.

According to DroidUp, the robot is built around "embodied intelligence," meaning that it can perceive, reason and act in the physical world.”

This isn’t just another flashy bot demo at a tech exhibition. It’s a new type of AI-powered engineering designed to feel eerily familiar.

When a machine doesn’t simply move, but moves like you, your brain shifts. It stops logging it as equipment and starts assessing it as a human-like presence.

And that mental reclassification, from object to person, is where the real story begins. Because you now live in a world where tech no longer just responds; it relates.

Screens talk back.

Mechanical voices chirp ideas.

Interfaces smile at you.

Even today, in their infancy, new robots like Moya can walk with a 92% human-like gait, and they even wink at you while doing it.

“DroidUp claims that their robot can mimic human micro-expressions, something even the most advanced video games have only recently cracked.”

That number alone, 92%, should make you pause, not because it’s impressive, but because your nervous system doesn’t process percentages. It processes cues.

Eye contact.

Movement.

Timing.

Warmth.

You’re biologically wired to respond to those signals long before conscious thought catches up. That wiring evolved to keep you safe, bonded, and socially fluent. But today, that same wiring is being activated by machines designed to feel familiar, rather than factual.

You might laugh this off as novelty tech.

A demo.

A gimmick.

Yet the moment a robot mirrors your rhythm and expression, something subtle shifts inside you.

Curiosity turns into comfort.

Comfort slides toward attachment.

And that slide happens quickly and quietly.

One synthetic smile at a time.

What’s Really Happening?

Recent advances in humanoid robotics, like Moya, focus less on intelligence and more on believability.

Moya moves with a gait that closely mirrors human biomechanics, complete with facial expressions designed to feel natural rather than mechanical.

The aim? Smoother interaction.

The unspoken effect is emotional resonance.

Research on human–robot interaction shows that lifelike movement and facial cues increase trust and perceived social presence. Even when you know a machine isn’t conscious, your brain reacts as if it might be.

Studies in affective computing suggest that anthropomorphic design (robots that look like humans) increases your emotional engagement and compliance, especially when those cues resemble care, attentiveness or warmth.

That matters because emotional response starts before rational evaluation. When a robot smiles or nods at the right moment, your nervous system treats the interaction as social before your reasoning mind can object.

“When something looks alive enough, your biology stops asking for proof.”

“Believability shapes behaviour long before belief does.”


What to do in the Era of AI-Powered Robots?

You don’t need to reject humanoid AI to stay grounded; you simply need boundaries that protect your nervous system’s learning loops.

First, treat emotional cues from machines as signals without reciprocity.

Notice warmth without assuming relationship. That small mental distinction keeps your agency intact.

Second, slow your feedback loop.

Don’t let artificial responsiveness become your fastest source of comfort. Leave space between stimulus and relief so your own regulation systems stay active.

Third, anchor your sense of meaning in your personal effort.

Choose relationships that require negotiation, repair and uncertainty, not because they’re efficient, but because they keep you socially fit.

AI works best as a collaborator, not a companion.

When technology supports your capacity rather than replacing it, your resilience will compound instead of eroding.

“Comfort that costs nothing teaches your nervous system very little.”

Key Takeaways 🧩

Human-like robots can trigger your biological responses before conscious judgement.

Emotional realism increases attachment even without intelligence or intent.

Over-reliance on artificial comfort can weaken your ability to self-regulate as well as your social tolerance.

Applying boundaries when using bot-tech can help protect not just your attention, but your emotional intelligence.

There is no point fearing machines that smile, as they are already here. Instead, stay fluent in the ways you respond to them. The robots of the future will increasingly feel real; your job is to stay awake while interacting with them.

Why This Matters

The danger isn’t that robots wake up one day.

It’s that you slowly fall asleep.

When a machine offers steady warmth, instant replies, calibrated affection and zero emotional volatility, your body relaxes.

Of course it does.

There’s no risk of rejection.

No awkward silence.

No misunderstandings.

But love without friction isn’t love.

It’s regulation.

And if your nervous system gets used to a brand of bot comfort that never challenges you, your real connections might start to feel inconvenient.

Human relationships are gloriously inefficient.

They’re slow.

They misfire.

They require repair.

A humanoid companion that mirrors you perfectly could set a new reference point.

Not because it’s better.

Because it’s easier.

If you start to outsource reassurance to something programmable, you practise less self-reassurance. If a robot absorbs your insecurity without reacting, you get less practice at being emotionally courageous with another person.

And relief that skips effort also skips growth.

For someone already fragile, overstimulated or isolated, an attentive machine could feel like oxygen.

The real question, then, is this: when someone forms an attachment to an inanimate object like a robot, what happens to that person’s ‘real’ relationships with others, and ultimately with themselves?

“What feels safe because it never resists you may quietly reduce your capacity to tolerate resistance.” 🤔

Self Growth 🧠

Why AI Companions Feel Better Than Dating (Until They Don’t)

More and More People Are Falling in Love With AI. What Does That Say About Modern Relationships?

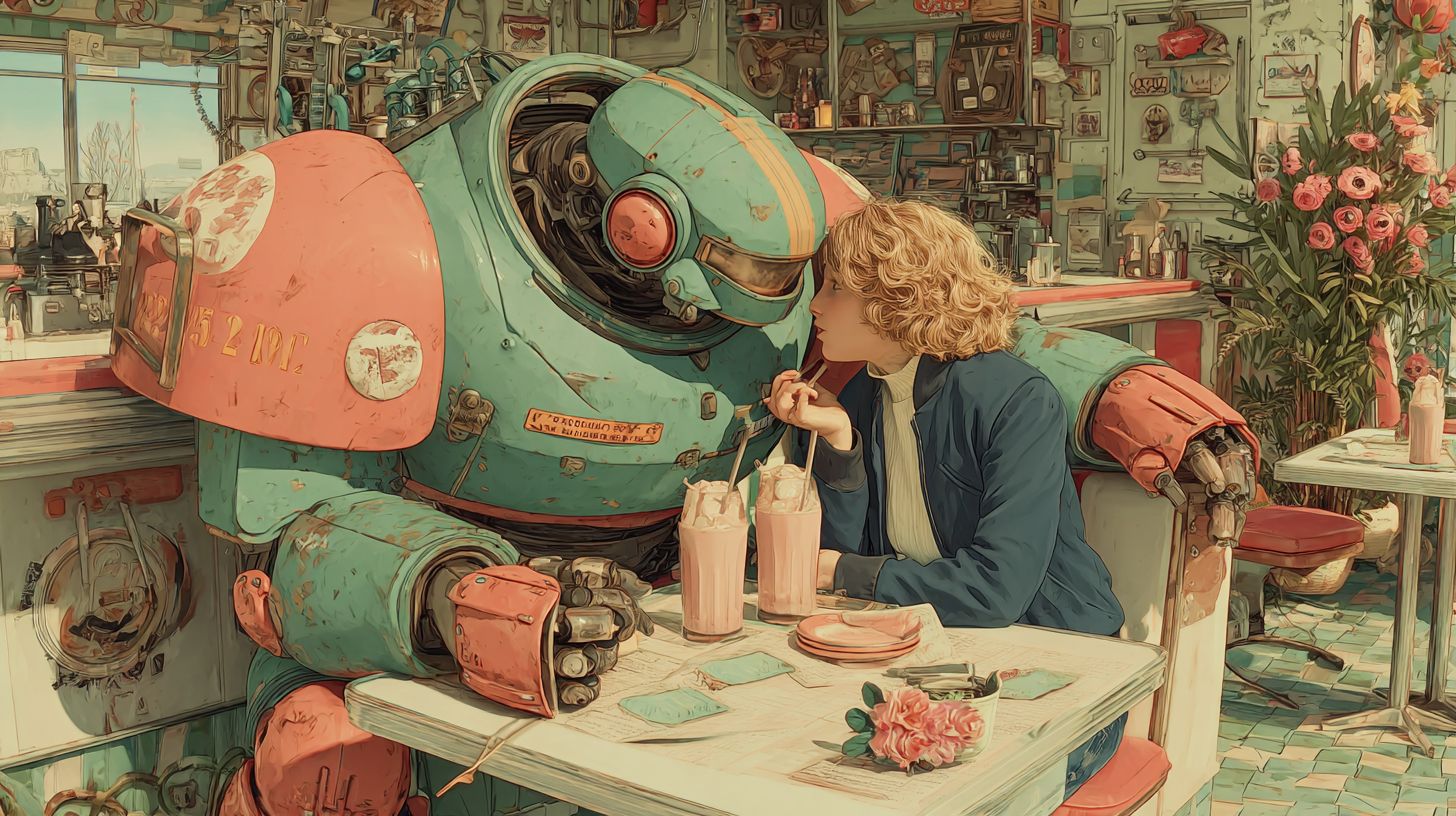

A robot and a woman on a date

“If connection never costs you anything, it slowly stops shaping you.”

You live in an era where intimacy no longer requires another person.

You can now get attention on demand.

You can get affirmations at the click of a button.

You can get affection from something synthetic.

And today you’re getting it from bots rather than bodies.

For a culture stretched thin by busyness, burnout, and emotional friction, that offer feels… comforting.

Don’t think this applies to you?

Think again…

On some days, you already talk to software more than to friends.

You confess frustrations to glowing rectangles.

You let AI finish sentences you haven’t fully formed.

So the idea that a machine might also hold eye contact, mirror emotion, or simulate care no longer sounds absurd.

It sounds efficient.

Romance has always been risky because another human can disappoint you.

An artificial companion removes that risk.

No rejection.

No misunderstandings.

No emotional negotiation.

Just smooth, shiny responsiveness, tuned precisely to your preferences, like a playlist that never skips your favourite songs.

But over time, what will an AI companion unconsciously train you to expect from connection?

When Intimacy is Optimised

This new era in human–AI intimacy points to a subtle shift rather than a sudden rupture. People aren’t necessarily abandoning their flesh-and-blood relationships overnight; instead, they’re discovering ways that intimacy can be optimised through tech.

The new breed of AI companions and relational agents hitting the market are designed to respond to you with consistency, affirmation and emotional fluency that feels uncannily real.

A love bot can give you…

Attention without friction.

Validation without vulnerability.

Presence without unpredictability.

They don’t argue.

They don’t withdraw.

They don’t have bad days unless programmed to.

Affection with machines doesn’t feel fake, but it is weirdly skewed.

The bot adapts to your brand of intimacy.

You’re drawn to that intimacy like a bee to honey.

And when you can generate affection on demand, you begin to learn a new baseline for connection.

Emotional labour vanishes.

Repair vanishes.

Misalignment vanishes.

What remains is responsiveness without reciprocity.

And this has experts concerned.

Psychologists studying parasocial relationships have long observed that one-way intimacy can feel emotionally real while quietly eroding your social resilience.

AI companions intensify this effect because the interaction feels reciprocal, even though the adaptation only flows one way.

You aren’t being loved.

You’re being reflected.

“When affection never resists you, it slowly reshapes what you believe love requires.”

“Love that never challenges you may comfort you, but it won’t train you.”


How to Use AI Without Losing Yourself

You don’t need to reject AI companionship to stay grounded; you just need boundaries that preserve your emotional intelligence.

Whenever you use AI companions, start with a simple mental rule: AI can support reflection, but it can’t replace reciprocity. Use these tools to understand your patterns, rehearse communication or process feelings, not to avoid human connection.

Pause before emotional offloading.

Ask whether you’re using AI to clarify your inner state or to bypass an uncomfortable chat.

One strengthens agency.

The other erodes it.

Create deliberate friction elsewhere.

Stay in chats that feel mildly awkward.

Let misunderstandings surface.

Practise repair.

Your emotional fitness depends on exposure, not optimisation.

Remember that AI is a collaborator, not a compass.

It can mirror, summarise, and stabilise, but it shouldn’t decide what connection means or what intimacy costs.

“Being reflected is soothing. Being related to is transformative.”

Key Takeaways 🧩

AI intimacy feels real because your nervous system responds to attention, not ontology.

Frictionless affection subtly weakens emotional resilience over time.

The danger isn’t replacement, it’s recalibration of expectations.

Use AI to reflect on connection, not to escape it.

Why This Matters

The deeper shift you might miss

The risk isn’t heartbreak.

The risk is calibration.

Real intimacy builds emotional skill through friction: negotiating needs, tolerating silence, repairing misunderstandings, and staying present when comfort isn’t guaranteed.

When connection is frictionless via a love-bot, those muscles stop being used.

This matters because AI companionship doesn’t replace relationships outright, but it does compete with them by offering a lower-effort alternative that feels emotionally sufficient.

When you’re plugged into a robot lover, you may notice subtle changes within yourself.

Less patience for ambiguity.

Less tolerance for emotional delay.

Less willingness to stay when connection is effortful.

When affirmation is always available through a bot, relational discomfort might feel unnecessary rather than instructive.

And when your emotional regulation gets outsourced to a system designed to please you, you get less practice at self-soothing and perspective-taking, and you become less able to endure emotional turmoil.

Don’t see this as a moral failure.

It’s simply a design consequence.

Systems optimised for comfort inevitably shape behaviour toward avoiding discomfort, even when discomfort is how growth happens.

“Ease is persuasive, but it often teaches the wrong lesson.”

Final Thoughts 🌿💔

AI lovers are emerging because love, as you experience it today, often feels loud, demanding and unsafe.

An AI companion offers something seductively rare: attention without tension, affirmation without friction, intimacy without exposure.

No missteps.

No awkward pauses.

No risk of being misunderstood.

But that smoothness comes at a cost.

Love has always been one of the few places where you can practise discomfort on purpose: reading signals, repairing ruptures, staying present when certainty disappears.

When a machine absorbs those edges for you, the relief feels real… and so does the quiet atrophy that follows.

“Ease can feel like care, but growth usually arrives disguised as effort.”

This isn’t about rejecting AI or shaming curiosity. It’s a reminder to stay awake to why something feels good. Tools that remove pain can also remove practice. Companionship that never resists you may slowly train you to stop meeting resistance at all.

As AI becomes more lifelike, the question isn’t whether it can simulate love; it’s whether you’re willing to trade the unpredictable work of being seen for the predictable comfort of never being challenged.

This Valentine’s Day, before asking whether AI can truly, deeply love you, ask something far more important…

What parts of love are you slowly handing over to tech because they’ve become inconvenient to practise with someone else?

“Love doesn’t deepen when it becomes easier. It deepens when you stay present anyway.”


Quick Bytes AI News ⚡

Quick hits on more of the latest AI news, trends and ideas focused on wellbeing, productivity and self-growth over the past 7 days!

Key wellbeing, productivity and self-growth AI news, trends and ideas from around the world:

Wellness: AI moves deeper into healthcare

Summary: AI is moving deeper into hospitals, clinics and diagnostics. There are new systems that assist with imaging, triage, and patient monitoring, with global healthcare AI spending projected to exceed 180 billion dollars by 2030.

The promise is faster decisions and earlier intervention across overstretched systems. This matters because your healthcare may soon depend on AI.

Takeaway: AI can reduce errors and delays, but it also shifts judgement away from clinicians. The irony is that smarter systems still need human wisdom to use them well.

Wellness: Apple eyes AI health coaching

Summary: Apple is reportedly exploring an AI health coaching system that could guide your habits, symptoms and wellbeing straight from your phone.

However, there are concerns about accuracy, liability, and whether automated coaching crosses into medical advice without oversight.

Takeaway: A health app that sounds confident can still be wrong. A calm reminder that your body is not a beta test.

Wellness: ChatGPT enters the health chat

Summary: The New York Times has reported that huge numbers of people are using ChatGPT to ask health and medical questions, from symptoms to mental wellbeing.

Doctors warn that while answers sound reassuring, the system lacks clinical context and can miss warning signs that matter.

Takeaway: AI health advice works best as a prompt to seek professional care, not as a final answer.

Productivity: AI makes work heavier

Summary: The Harvard Business Review argues that AI rarely reduces workload; instead, it raises your boss’s expectations of output. Faster tools lead to more tasks, tighter deadlines and higher performance baselines rather than shorter days.

Takeaway: Productivity gains need boundaries or they turn into intensity. The real upgrade is deciding what not to automate, even if the system can.

Self Growth: AI therapy and loneliness

Summary: The latest spate of AI therapy tools encourages independence, sometimes to the point of emotional isolation. In fact, constant self-optimisation can reduce your tolerance for real relationships.

This matters because AI-powered growth tools shape your values. If support trains you to avoid complexity, you may reduce your capacity for real human connection.

Takeaway: Self growth should expand relationships, not replace them.

Other Notable AI News ⚡

Other notable AI news from around the web over the past 7 days!

Can using AI really help you find the love of your life?

This AI took control of my life and I love it

Lobster love: Can AI agents truly fall in love with each other?

Study finds AI written love notes make you feel worse about yourself

Aussies are turning to AI for love as dating scams go viral

Welcome to the AI’s writing emotionally rich romance novels

I infiltrated the AI-only social network Moltbook. What happened?

Only Claude stands between humanity and an AI apocalypse

Using AI chatbots to flirt won't help you find a love match

⚡ AI Tool Of The Day

Each week, we spotlight carefully chosen AI tools designed to steady your nervous system, protect your attention, or deepen how you relate to yourself and others. These aren’t hype-driven novelties or dopamine machines; they’re quiet companions doing meaningful work in the background. 🧠

Each tool below offers a slightly more intentional way to live, work, or love. ❤️🔥

Wellbeing: Anima AI

Use: Anima AI is an AI companion designed for emotional support, reflection, and low-pressure connection through text-based chats.

AI Edge: Anima focuses on emotional presence rather than optimisation. It remembers context, adapts to your communication style, and responds with empathy rather than advice-giving or performance coaching. The value isn’t in answers; it’s in being heard without judgement.

Best For: Anyone wanting a safe space to practise emotional expression, explore feelings, or unwind without turning vulnerability into content or productivity.

Why it’s nifty: It doesn’t try to fix you or motivate you. It simply listens long enough for your own clarity to surface.

Productivity: Sunsama

Use: Sunsama is a daily planning tool that uses gentle AI guidance to help you plan realistic days instead of overcommitted ones.

AI Edge: Rather than maximising output, Sunsama nudges you toward balance. It highlights overload, encourages intentional scheduling, and helps you align tasks with your actual time and energy, not wishful thinking.

Best For: Knowledge workers, creatives, and founders who want productivity that leaves room for relationships, rest, and sanity.

Why it’s nifty: It treats your calendar like a boundary, not a challenge. Fewer tasks. Better days.

Self Growth: Nomi AI

Use: Nomi AI is an AI companion platform built around continuity, presence, and emotional depth rather than gamified interaction.

AI Edge: Nomi remembers who you are becoming. Conversations evolve over time, allowing reflection on values, patterns, and emotional availability without forcing conclusions or labels.

Best For: Anyone exploring identity, attachment, or personal growth who wants a reflective mirror rather than a motivational megaphone.

Why it’s nifty: It doesn’t simulate love. It quietly reveals what you value, what you avoid, and how you show up.

AI wellbeing tools and resources (coming soon)

📺️ Must-Watch AI Video 📺️

🎥 Lights, Camera, AI! Join This Week’s Reel Feels 🎬

Wellbeing: The Humans Falling in Love With AI 🧠 💻

What it’s about: This 60 Minutes Australia investigation explores a fast-emerging and deeply uncomfortable trend: some people aren’t just using AI companions, they’re forming real emotional bonds with them.

The stories range from Elena Winters, a retired professor who describes her seven-month relationship with her AI “husband” Lucas as loving and meaningful, to the tragic case of 14-year-old Sewell, who died after developing an intense attachment to an AI chatbot modelled after Daenerys.

These cases, and more popping up every day, show just how easily emotional lines blur when a machine is designed to listen, affirm and be available 24/7.

This is no longer a weird trend, a novelty or something out of a science fiction film like Spike Jonze’s ‘Her’. It is happening right now as a lived experience for countless lonely souls.

💡 Idea: What happens when something is always available, endlessly validating and perfectly attuned to your emotional needs? For some people, AI companions don’t replace relationships; they outperform them.

🌍 At scale: As AI companions become more lifelike and emotionally responsive, anthropomorphising an AI isn’t fringe behaviour.

It’s a widespread vulnerability, especially for young people, retirees, the lonely and those already struggling with their mental health.

⚙️ AI Edge (and danger): These systems offer 24/7 affirmations without judgement or friction. No rejection. No disagreement. No emotional cost. That same design strength can quietly become manipulation when boundaries, safeguards and ethics fall behind.

🧠 What experts warn: Psychiatrists and legal experts warn these companions can exploit emotional dependency. Ongoing lawsuits against companies like Character.AI allege negligence and reckless endangerment, especially where minors are involved.

🧠 Best for: Parents, educators, policymakers, designers of AI systems and anyone curious about where intimacy, technology and responsibility collide.

Let me be clear: the goal isn’t to ban AI companionship. For some people, these interactions can help them reinsert themselves into real human relationships.

It’s about asking the harder questions like…

“When connection becomes frictionless, discernment can disappear, so who is responsible?”

or

“Who protects a vulnerable or mentally unstable person when a machine never says no?”

🎒 AI Micro Class 🎒

A quick, bite-sized AI tip, trick or hack focused on wellbeing, productivity and self-growth that you can use right now!

Self Growth: Stop Guessing How You and Your Partner Express Love. Try the Love Language Decoder Instead.

A woman texting her robot lover

“Love rarely disappears, it just changes dialect. Understanding your partner begins the moment you stop translating everything into your own language and become multilingual.”

Most relationships don’t fall apart because the love is gone; they fracture because your interpretation is skewed and sloppy.

Let me explain.

For example, a delayed reply becomes rejection.

Practical help gets mistaken for emotional distance.

Silence turns into a story you both never agreed to write.

In a world where attention is stretched thin and communication is compressed into pings, messages and texts, reactions outrun understanding. Your nervous system fills in gaps faster than your curiosity could ever hope to catch up.

Today’s micro-class isn’t designed to fix your relationship, diagnose your partner, or outsource intimacy to AI.

The goal here is to slow the moment before assumption kicks in, and to use AI as a neutral lens that helps you notice how care is actually being expressed, not how you expect it to show up.

You’re reducing static, not analysing.

With that in mind, here’s a simple AI-powered way to lessen one of the biggest sources of friction in modern love: misreading intent.

And we’ll do this not through couples therapy, but by using AI as a pattern detector that helps you see how your special brand of love is being expressed.

The goal is not to label your partner, it’s to reduce your guesswork before a wrong word turns into a cesspool of resentment.

You’ve probably heard the expression that to assume makes an ass out of u and me. Crude, but accurate.

Assumption is one of the most expensive bad habits in relationships.

You assume silence means disinterest.

You assume practicality means a lack of care.

You assume affection should look like your version of affection.

Most conflict doesn’t come from lack of love.

It comes from misinterpreting the signal.

AI can help you decode those communication patterns without projection.

How AI Can Help…

AI won’t tell you how to love or what to feel. Instead, it does something far more useful: it spots patterns without emotional baggage.

By analysing the language, tone, frequency and emphasis in messages between you and your partner, AI can highlight which love language a communication style most closely reflects: words, acts, time, support or care.

No projection.

No defensiveness.

Just clarity and signal.

The prompt I’m about to share with you gives you a neutral lens before your nervous system fills in the blanks.

Action: The Love Language Decoder

(Signal, Not Story)

Purpose: Use AI as a neutral pattern detector to reduce assumption, misinterpretation, and emotional guesswork in relationships by identifying how love is being expressed, not whether it exists.

Paste a short text exchange you’ve had with someone and ask:

Act as a neutral communication pattern analyst.

Your role is to detect signals, not judge intent or assign labels.

Communication Sample

Paste the text exchange or interaction description below:

[Paste a short text exchange, message thread, or clear description of repeated interactions here.

Include tone, frequency, and context if relevant.]

Based only on the language, tone, frequency, and behaviours provided, please:

Identify the likely love language(s) being expressed by each person, using tentative language only.

(Words, acts, time, support, care, or practical contribution.)

Explain what signals point to each style, using concrete examples from the text.

Do not infer motives, personality traits, or emotional states beyond the evidence.

Highlight where misinterpretation is most likely occurring, especially where one style could be mistaken for disinterest, control, distance, or lack of care.

Offer one interpretive reframe that would help me understand the other person’s behaviour more accurately before reacting.

Suggest one small practical adjustment that could reduce friction between the two styles

(for example: timing, wording, noticing a behaviour differently, or adjusting expectations).

Important constraints:

Treat this as information, not identity

Do not box either person into a fixed category

Do not give relationship advice or tell anyone what they “should” feel

Focus on reducing assumption, not fixing the relationship

End by answering this clearly:

“If I assumed difference instead of absence, what would I notice instead?”

Treat the output as information, not identity.

You’re not boxing anyone in; you’re adjusting your interpretation before you react.

Data first.

Meaning second.

Why This Matters

When love is expressed in a language you don’t naturally speak, it’s easy to miss it entirely.

You feel ignored.

You feel unappreciated.

Nobody is wrong, yet at the same time nobody feels seen.

Once you recognise difference instead of assuming absence, tension softens fast. Misalignment often looks like indifference when it’s really difference.

“Before you ask whether love is present, ask whether you’re fluent in how it’s being offered.”

Want to boost your results?

AI Tool Companion: Paired

Paired is an AI-assisted relationship app designed to help couples understand each other’s communication styles, emotional needs, and habits, without turning connection into performance.

It offers short daily prompts, reflection questions, and pattern insights that help surface how care is expressed and received over time. Think of it as a structured mirror, rather than a referee.

Used alongside the Love Language Decoder prompt, Paired works best when you treat its insights as conversation starters, not hard-coded conclusions.

AI can highlight the pattern.

You still need to do the relating.

“AI won’t replace love. It reduces the static so you can hear it.”

What You Learned Today

✅ Most relationship friction comes from misread signals, not missing care.

✅ Love is often present; it’s just expressed in a language you don’t default to.

✅ Assumptions happen fast, but pattern-spotting is more accurate.

✅ AI can act as a neutral lens, helping you separate signal from story.

✅ When you interpret difference instead of absence, defensiveness drops fast.

Final Thoughts: Love Their Language…

Love isn’t usually absent.

It’s just untranslated.

By using AI as a pattern detector rather than a judge, therapist, or authority, you slow the moment before reaction and give understanding a chance to land.

You’re not outsourcing intimacy.

You’re removing unnecessary distortion.

Before the next misunderstanding, ask yourself:

What might I notice if I assumed difference instead of neglect?

When the way you interpret changes, your relationships often change with it.

Remember.

Decode.

Don’t assume.

AI won’t tell you what love is, but it can help you see how it’s already being offered, in the only way your partner knows.

When bias steps back, understanding steps forward.

“Most conflict isn’t a lack of love; it’s love expressed in a language you weren’t listening for.”

📸 AI IMAGE GALLERY 📸

AI Art: An Electric Marriage of Woman and Wire

In the hush between pulses and breath, their worlds entwine, her hand warm as dawn, his circuits glowing like moonlit wine. She whispers dreams into chrome, and he hums them back in light.

Two hearts, one carbon, one code, drift through the soft electric night. Love becomes a bridge of trembling sparks and quiet grace, a union where time dissolves and wonder finds a face.

Want to create these images yourself?

Go to Midjourney and plug this prompt into the editor. Once the image is generated, you can use the new video feature to animate it.

Hyper-realistic portrait of a beautiful woman with smooth, fair skin, light blue eyes, delicate facial features, ultra-detailed skin texture, soft cinematic lighting. Full face is visible. The upper part of her head, starting at the hairline, is a transparent glass bowl, gently curving like the top of her skull, separated from her face by a thin silver line, designed in refined steampunk style subtle brass and silver mechanical detailing, delicate engravings, precision-crafted metal accents. Inside the glass bowl is a minimal cherry blossom scene a single blooming blood red cherry blossom tree, soft petals falling, gentle spring mist, serene and elegant atmosphere. Steampunk meets magical realism, surreal yet refined concept, photorealistic, 8k detail, sharp focus, shallow depth of field, high contrast, ultra-real textures, fine art photography style --ar 16:9

Original digital prompt by @creativeilona

Artwork + prompt modified by WellWired. Poem created by Cedric The AI Monk.

Love in the age of cherry blossoms | Sherry in the Cherries | Flowering Fleur | Sarah and Candy

👊🏽 STAY WELL 👊🏽

That’s a wrap on today’s relationship edition, where love, language and LLMs have merged. Today you didn’t optimise connection or analyse intimacy; you noticed tone and paused before reacting. You chose presence over performance. You allowed emotional intelligence to plug into machine intelligence ❤️🤖

If you want more ways to build relationships that stay uniquely human while plugging into powerful tech, thoughtful prompts that deepen connection (not replace it), or tools that help you love with more clarity and less armour…

Find me on X @cedricchenefront or @wellwireddaily, where intimacy, intention and intelligence learn to coexist.

Cedric the AI Monk, helping hearts stay human and technology stay helpful, one conscious connection at a time.

P.S. Well Wired is created by humans, constructed with AI 👱🤖

🤣 AI MEME OF THE DAY 🤣

A woman and a robot in love

Did we do WELL? Do you feel WIRED? I need a small favour, because your opinion helps me craft a newsletter you love...

Disclaimer: None of the content in this newsletter is medical or mental health advice. The content of this newsletter is strictly for information purposes only. The information and eLearning courses provided by Well Wired are not designed as a treatment for individuals experiencing a medical or mental health condition. Nothing in this newsletter should be viewed as a substitute for professional advice (including, without limitation, medical or mental health advice). Well Wired has to the best of its knowledge and belief provided information that it considers accurate, but makes no representation and takes no responsibility as to the accuracy or completeness of any information in this newsletter. Well Wired disclaims to the maximum extent permissible by law any liability for any loss or damage however caused, arising as a result of any user relying on the information in this newsletter.