AI May Be Reshaping Your Relationship To Money And You Don’t Even Know it!

And the Woman Who is Worried That AI Is Thinking For Her Boyfriend...

Welcome back Wellonytes 💻

This week’s Well Wired explores that uneasy moment when trust in AI starts to wobble. Doctors are raising alarms, financial habits are shifting in ways no one expected, and AI health assistants are making mistakes that are far from harmless.

Something important is changing, but the real tension lies in what you haven’t heard yet.

We’re also diving into the white-collar jobs being taken by AI, the fading honeymoon phase with AI confidants, and one reader’s unsettling story: “I think AI is thinking for my boyfriend.”

The patterns forming beneath these stories hint at a bigger shift coming, and it’s closer to your daily life than you might think.

And of course, remember that Well Wired ⚡ ALWAYS serves you the latest AI-health, productivity and personal growth insights, ideas, news and prompts from around the planet. We’ll do the research so you don’t have to! ❤️

Well Wired is constructed by AI, created by humans 🤖👱

Today’s Highlights:

🗞️ Main Stories AI in Wellness, Self Growth, Productivity

😁 Learn & Laugh AI in Wellbeing 📚

AI Idea Of The Week: Ice Baths to Bytes: Can AI Dose Your Stress?

AI Video Of The Week: Why Conscious AI Would Be a Catastrophe

AI Tools Of The Week: Biofourmis, Adept (ACT-1), Waking Up

AI Micro-Class: The Silent Divide Between Amateurs and Pros

AI Gallery: The Technicolour Time Traveller

Read time: 6 minutes

💡 AI Idea of The Week 💡

A valuable tip, idea, or hack to help you harness AI

for wellbeing, spirituality, or self-improvement.

Wellbeing: From Ice Baths to Bytes: Can AI Dose Your Stress?

If you’ve ever studied your stress levels with a wearable, or done a little ‘self’ research on the topic, you probably already know that stress isn’t the enemy.

But what you may not know is that bad dosing is.

It’s called hormesis: a biological phenomenon where low doses of a stressor (like toxins, radiation, or physical stress) stimulate beneficial, adaptive, and protective responses in you, while high doses are toxic or inhibitory.

Hormesis is a bit like making a great espresso.

You need just the right dose of coffee and water, added at exactly the right time to create the perfect brew.

Too little of one ingredient?

It tastes horrible.

Too much?

You’re feeling uber jittery in the middle of the night questioning your life choices.

Your health choices are similar.

Cold plunges.

Fasting.

Heat exposure.

HIIT.

Breathwork.

All powerful.

All dose-dependent.

Here’s the interesting shift:

AI can help you get your health doses just right.

You can now track your HRV, sleep stages, recovery curves, inflammatory markers and performance outputs and detect when your stress load tips from adaptive to excessive.

What this means is that instead of guessing if today is “ice bath day” or “go lie down and rethink my life” day…

You can model the day to your particular brand of stress based on where you are right now.

That’s sovereign somatic training.

You see, resilience isn’t about enduring more stress, it’s about applying the minimum effective dose. And now AI can help.

Modern Monk principle…

Be nervous system first.

If your HRV trends downward, resting heart rate climbs, sleep fragments and cognitive sharpness drops, your system isn’t adapting.

It’s accumulating.

Here’s your AI-powered move…

Before your next stress ritual, ask, “Based on my recent recovery signals, should I increase, maintain or reduce stress exposure today?”

Use your wearable data.

Feed it into AI.

Let it detect patterns you miss.

Not to outsource your intuition to tech, but to refine it.
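
If you like to see an idea in code, here’s a minimal Python sketch of that check. It’s an illustration only, not medical advice and not how any particular wearable or AI tool works: the function name `stress_dose_advice`, the 7-day recent window and the 5% thresholds are all assumptions made up for this example. It compares your recent average HRV and resting heart rate against your longer baseline and suggests whether to increase, maintain or reduce today’s stress exposure.

```python
from statistics import mean


def stress_dose_advice(hrv_ms, resting_hr_bpm, recent_days=7, threshold=0.05):
    """Suggest 'increase', 'maintain' or 'reduce' from daily wearable readings.

    hrv_ms and resting_hr_bpm are lists of daily values, oldest first.
    The window size and the 5% threshold are illustrative assumptions.
    """
    if len(hrv_ms) != len(resting_hr_bpm):
        raise ValueError("need one HRV and one resting HR value per day")
    if len(hrv_ms) < 2 * recent_days:
        raise ValueError("need at least twice the recent window of data")

    # Baseline = everything before the recent window.
    base_hrv = mean(hrv_ms[:-recent_days])
    base_rhr = mean(resting_hr_bpm[:-recent_days])
    recent_hrv = mean(hrv_ms[-recent_days:])
    recent_rhr = mean(resting_hr_bpm[-recent_days:])

    # Relative change versus baseline (negative HRV change = trending down).
    hrv_change = (recent_hrv - base_hrv) / base_hrv
    rhr_change = (recent_rhr - base_rhr) / base_rhr

    # HRV falling and resting HR climbing together: load is accumulating.
    if hrv_change < -threshold and rhr_change > threshold:
        return "reduce"
    # HRV rising while resting HR holds steady or drops: room to adapt.
    if hrv_change > threshold and rhr_change <= 0:
        return "increase"
    return "maintain"


# Example: HRV slides from 60 ms to 50 ms while resting HR climbs 55 -> 60.
hrv = [60] * 14 + [50] * 7
rhr = [55] * 14 + [60] * 7
print(stress_dose_advice(hrv, rhr))  # reduce
```

Treat the output the same way you’d treat any AI answer here: as a prompt for your own judgement, not a verdict.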

Hormesis is deliberate discomfort.

Not search and scramble and hope for the best.

Resilience isn’t built by proving you’re tough.

It’s built by respecting your personal adaptation cycles.

Ice baths train you to tolerate cold.

AI can train you to tolerate your ‘stress blueprint’ reality.

The future of optimal performance isn’t to be harder skinned or more resilient.

It’s to use AI to get smarter about your stress.

Final thought:

The future of optimisation isn’t harder training.

It’s precision dosing.

AI + Awakened =

Measure.

Adjust.

Adapt.

🗞️ On The Wire (Main Story) 🗞️

Discover the most popular AI wellbeing, productivity and self-growth stories, news, trends and ideas impacting humanity in the past 7-days!

Wellbeing 🌱

Your Doctor Is Worried About AI… Should You Be Too?

A human GP freaks out about his medical robot

“The most dangerous form of intelligence is the kind that answers quickly and with absolute certainty.”

Have you ever sat up at midnight Googling about a strange chest pain or why you’ve grown a weird long brown hair on your kneecap?

Well, now you can ask any number of AI-powered health chatbots and receive a confident answer in seconds.

And you can do it anytime, 24/7.

No more sitting in waiting rooms while reading a 1993 copy of Woman’s Day.

No more fluorescent lights that make your eyes water.

No more awkward silences while a GP scrolls through your chart.

This new AI-powered shift feels empowering.

Yet at the same time, it’s also changing the way you relate to expertise, risk, and trust.

The AI healthcare revolution is already at your local clinic.

Diagnostic tools draft clinical notes.

Algorithms flag anomalies in scans.

Large language models summarise medical research faster than your GP can.

And your doctor is feeling uneasy.

Not because this wondrous tech exists, but because of how quickly you may start believing it over them.

Doctors around the globe are panicking!

Will they be out of the job?

What’s Really Happening?

AI systems are rapidly embedding themselves into clinical workflows.

Tools now assist with triage, imaging analysis, treatment suggestions and documentation.

In fact, some studies show AI can match or even exceed human diagnostic accuracy in narrow tasks like detecting certain cancers on imaging.

A 2023 review in The Lancet Digital Health found AI models performing at or above clinician level in specific radiology benchmarks.

That sounds reassuring.

Like magic!

But it’s also limited.

These systems excel in pattern recognition within defined datasets.

Your body, however, is not a dataset.

Your symptoms don’t always follow clean statistical curves.

Context matters.

History matters.

Subtle emotional cues matter.

An AI can process correlations at scale, but your doctor reads nuance.

Doctors are worried because AI-powered healthcare isn’t just about accuracy, it’s about over-reliance by the patients and medical experts who use it.

When you ask a medical AI about symptoms and the answer sounds fluent and authoritative, you start to treat these medical suggestions as conclusions without using your critical thinking.

And that shift is behavioural.

“AI-powered tools can expand a doctor’s capability, but they should never replace their judgement.”

“Technology can process your data. Only you can own your decisions.”

#AI #HumanAI #Wellbeing #AIDoctor #MedicalAI #WellWired

How Does This Affect You?

Whether you’re a medical professional or you simply want to harness AI to support your health journey, the idea is not to reject AI outright, it is to simply create boundaries.

Treat AI answers as drafts, not verdicts. Use them to prepare better questions for your doctor, not to replace that vital chat with AI medical jargon.

When a tool gives you a probability or recommendation, pause and ask:

What am I really assuming here?

What data might I be missing?

What context can only I provide?

How does this plug into my medical history?

Let AI accelerate your information gathering, not compress your deliberation.

Approach it as a collaborator, not a compass.

If an AI assessment contradicts your intuition or lived experience, bring that tension into the chat with your GP.

Tension sharpens clarity.

Silence dulls it.

Calm, conscious, critical thinking is important to preserve your health-focused agency.

You can benefit from technological augmentation without surrendering your judgement.

That balance is the difference between empowerment and dependency.

“Confidence in a machine grows when your confidence in yourself is untrained.”

Key Takeaways 🧩

AI in healthcare is powerful at pattern recognition and connecting the dots, not at holistic judgement.

Over-reliance subtly trains you to outsource your discernment.

Fast answers can reduce your tolerance for uncertainty and complexity.

Treat AI as a tool, or a teammate, to prepare for a healthcare session, not as a replacement for medical expertise.

Why This Matters

When outsourcing your medical research to AI, the risk you take is that you stop practising judgement and critical thinking.

Imagine this.

It’s 11:47pm.

You’ve got a weird pain in your chest. Not dramatic. Just enough to make your brain start whispering, “What if?”

So you grab your phone.

You type your symptoms into an AI chatbot. Ten seconds later, it replies with a clean, calm answer:

“Based on your symptoms, there is a 72% probability this is mild muscle strain.”

Seventy-two percent.

That sounds solid.

Scientific.

Almost comforting.

Your shoulders drop. You breathe easier.

But here’s the part no one tells you.

The number isn’t magic. It’s a guess trained on millions of past cases. It doesn’t know you skipped lunch. It doesn’t know you’ve been stressed all week. It doesn’t know what that pain feels like inside your body.

It knows patterns.

It doesn’t know you.

If you keep asking it every time something feels wrong, something else starts happening. You stop asking deeper questions. You stop sitting with uncertainty. You stop practising that uncomfortable skill of thinking things through.

You get used to instant answers.

And your brain slowly forgets how to wrestle with doubt.

It’s like always using a calculator for simple maths. At first it saves time. After a while, your mental arithmetic gets rusty.

The danger isn’t that AI helps you.

The danger is that you stop helping yourself.

Final Thoughts 💭

An AI can suggest what might be happening, but it can’t feel the consequences if it’s wrong.

It doesn’t live in your body.

It doesn’t wake up in the middle of the night with your heart racing.

You do.

AI is a brilliant assistant.

But the final decision about your health?

That weight still belongs to you.

“When tech removes friction, it also removes that pause where your wisdom forms.” 🤔

Self Growth 🧠

AI May Be Reshaping Your Relationship To Money And You Don’t Even Know it!

A bunch of coins falling onto a robot

“A notification can suggest a purchase, but it can’t tell you your priorities.”

You used to check your bank balance.

Now your bank checks you.

It sends nudges.

It predicts bills.

It warns you about “unusual behaviour.”

It may even suggest how much you should save, spend, or invest.

At first, that feels helpful.

Like having a tiny financial assistant in your pocket.

But something subtle is changing.

Your relationship with money is no longer just between you and your choices.

There’s a third voice in the room now.

And it speaks in notifications.

What’s Really Happening?

Imagine this.

You open your banking app and it tells you:

“Based on your spending this week, you could save $327 this month.”

“Unusual transaction detected.”

“You’re likely to run short before payday.”

It feels smart.

Predictive.

Almost protective.

Behind the scenes, AI is analysing patterns in your spending. It studies when you buy food, how often you travel, how much you tip, even how late at night you scroll and shop.

It doesn’t just record what you’ve done.

It tries to guess what you’ll do next.
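
To make that “guess what you’ll do next” a little less mysterious, here’s a toy Python sketch of a “run short before payday” nudge. Real banking models are far more complex and aren’t public, so everything here is an assumption for illustration only: the function name `will_run_short`, the simple average-daily-spend projection and the sample numbers.

```python
def will_run_short(balance, recent_daily_spend, days_until_payday):
    """Project the balance at payday from average recent daily spending.

    Returns (runs_short, projected_balance). A plain average is an
    illustrative assumption; it ignores bills, income and seasonality.
    """
    if not recent_daily_spend:
        raise ValueError("need at least one day of spending history")
    avg_spend = sum(recent_daily_spend) / len(recent_daily_spend)
    projected = balance - avg_spend * days_until_payday
    return projected < 0, projected


# Example: $200 left, about $30 a day lately, 10 days until payday.
short, projected = will_run_short(200, [25, 35, 30, 30], 10)
print(short, projected)  # True -100.0
```

Notice what the code can’t see: why you spent that money, or whether it was worth it. That part is still yours.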

In some cases, AI systems can assess credit risk, flag fraud in seconds, or automate investment decisions using massive data sets.

That’s impressive.

But here’s the quiet shift you probably don’t yet see…

When tech starts predicting your money habits, you may start relying on it to decide what is “normal” for you.

And that slight shift can drastically change how you think.

When something else starts predicting you, you slowly stop predicting yourself.

“The more your finances run on autopilot, the less you practise steering.”

#AI #AIFinance #Wellbeing #MoneyMatters #DigitalCurrency #WellWired

This is Your AI-Powered Money Journey…

You don’t need to delete your banking app.

You just need to shift your money mindset.

When AI suggests something about your money, pause and ask:

Is this advice or is this alignment?

Does this match my long-term money goals?

Would I make this decision without the notification?

Use AI to track spending patterns, but don’t let it define your savings standards.

Treat AI like a calculator, not a compass.

It can process vast amounts of financial data, but it can’t decide your priorities for you. If you stop practising financial thinking, your judgement will dull, but if you stay on the money, your clarity sharpens.

Money isn’t just about optimisation, it’s about intention.

“AI can calculate what you can afford, but only you can decide what is worth it.”

Key Takeaways 🧩

AI can now predict and influence how you spend, save and invest.

Helpful nudges can slowly replace your judgement.

When AI says what’s “normal,” you may stop questioning your money and investment habits.

Use AI for awareness, not authority.

Why This Matters

Money isn’t just numbers.

It’s emotion.

Impulse.

Fear.

Hope.

Security.

When an app tells you that you “can afford” something, it doesn’t feel like a suggestion, it feels like permission.

When it flags something as risky, it feels like danger.

Over time, you may stop asking:

“Do I really want this?”

“Is this aligned with what I value?”

“Will this really help my life get better?”

Instead, you’ll ask:

“What does the app think?”

“How can the machine manage my money?”

That’s the subtle part.

Over time you’ll practise less independent judgement and you’ll tolerate less uncertainty.

You’ll start outsourcing tiny financial decisions.

Then slightly bigger ones.

And slowly, your confidence in managing your money without prompts will weaken.

“Convenience is comforting, until it replaces competence.”

AI can calculate faster than you ever will, but it can’t decide what matters most in your life.

Only you can.

“Your AI-powered money app can analyse your behaviour, but it can’t decide what kind of life you’re building.”

Final Thoughts 💭

Your money story used to be handwritten, now it’s partly auto-filled.

AI will be part of your money narrative, whether you like it or not. The question is: will you stay the main author, or simply be a character in your own rags-to-riches story?

“Tech can track your spending, but only you can decide your values.”

Quick Bytes AI News⚡

Quick hits on more of the latest AI news, trends and ideas focused on wellbeing, productivity and self-growth over the past 7 days!

Key AI Wellbeing, Productivity and Self Growth AI news, trends and ideas from around the world:

Wellness: Musk Says “Ask AI Your Doctor Questions.” Grok Says “Maybe Don’t.”

Summary: Elon Musk has repeatedly encouraged you to use AI for medical advice. But his own AI system, Grok, appears to caution users against relying on it for diagnosis or treatment.

The contradiction shows a growing tension in how tech leaders push AI as a fast source of answers, while the systems themselves warn about their limits. The appeal of instant medical clarity is strong, but reliability remains to be seen.

Takeaway: If a tool warns you not to trust it fully, listen. Speed is attractive. Accuracy is earned.

Wellness: When Your AI Health Assistant Gets It Wrong

Summary: As AI health assistants become more common, a difficult question arises: who is accountable when medical advice fails? Developers build the systems, clinicians integrate them, and patients like you act on the answers.

But responsibility can blur. When an automated AI-powered answer leads to harm, who bears the legal and ethical ramifications?

Takeaway: Automation does not remove accountability, it redistributes it. Make sure you know where you stand.

Wellness: The Silent Gaps in Medical AI Trials

Summary: A new report shows wide gaps and limitations in clinical trials for medical AI systems. Some tools are showing promise, but trials often exclude the diverse populations and real-world complexity needed to evaluate them properly.

The result is polished performance in controlled settings, with less certainty in the real world.

Takeaway: A model trained in narrow conditions may struggle in a broader reality. Context still matters more than code.

Productivity: The White-Collar Reckoning

Summary: Interactive reporting explores how AI systems are starting to reshape white-collar roles. Tasks once thought safe (drafting reports, analysing documents, generating briefs, even marketing) are slowly being automated.

The shift won’t be a dramatic overnight replacement of millions of workers, it will be a gradual displacement of routine jobs.

Takeaway: AI is here, whether you like it or not. Thousands of tasks that once only people could do are now being automated by AI. Millions could be out of work over the next decade, or sooner.

What to do? Build a skill that decides, not just a skill that executes.

Productivity: How to Hack AI in 20 Minutes

Summary: A journalist managed to bypass safeguards in major AI systems in under half an hour. The experiment exposed how easily AI tools can be manipulated into producing restricted or harmful content.

AI systems are powerful, but safeguards are still far from perfect.

Takeaway: If it can be broken quickly, use it carefully. Trust needs testing, not assumption.

Self Growth: Falling Out of Love With AI Confidants

Summary: When AI companions first hit the scene, people were scrambling to use AI for friendship, coaching and digital dating. However, early adopters are now cooling off. Some users report emotional fatigue or disillusionment after extended interactions with digital denizens.

What once felt intimate is starting to feel scripted. The novelty of constant affirmation fades and real depth has proven much harder to simulate.

Takeaway: To grow emotionally you need messiness and friction. Endless validation rarely stretches you.

Self Growth: You’re Overconfident Spotting AI Faces

Summary: New research shows that we are surprisingly poor at telling real faces from AI-generated ones, yet we stay highly confident in our ability to guess. The gap between perception and accuracy is wider than you’d expect.

Takeaway: In a synthetic age where AI deepfakes are everywhere it’s becoming harder than ever to sort out what is real and what isn’t. Remember, confidence is not proof.

Self Growth: “I Think AI Is Thinking For My Boyfriend”

Summary: A relationship advice column explores people’s growing dependence on chatbots and how over-reliance on AI for decisions may dull your critical thinking. When every question gets an instant answer, personal reasoning can atrophy.

One reader said, “I’m worried my boyfriend’s use of AI is affecting his ability to think for himself”. The worry isn’t about intelligence, it’s about dependence.

Takeaway: A tool that answers everything instantly can quietly weaken your ability to question. Be vigilant and set boundaries with how often you use AI.

Other Notable AI News⚡

Other notable AI news from around the web over the past 7 days!

The Most Overlooked Benefit of AI Isn’t Clinical, It’s Human

AI Execution Paralysis: Why Health Systems Are Stalling

Can AI See What Oncologists Miss? Race to Predict Ovarian Cancer

When AI Leaders Get Uncomfortable: Look at This AI Summit Moment

When You Can’t Trust Your Eyes: How Viral AI Videos Rewrite Reality

Complaints and AI Accusations: Is AI Amplifying Workplace Claims?

Is AI Intelligent? A Philosopher Says Yes, But Not the Way You Think

Is AI Really Draining the Planet? The Water Debate Gets Murkier

AI Tools Of The Week ⚡

Each week, we spotlight carefully chosen AI tools designed to steady your nervous system, protect your attention, or deepen how you relate to yourself and others.

These aren’t loud productivity boosters or novelty bots chasing attention, they’re quiet systems working beneath the surface. 🧠 Each tool below is a more deliberate way to heal, build, or reflect.

Wellbeing: Biofourmis

Use: Biofourmis is an AI-powered remote patient monitoring platform. It analyses physiological data from your wearables to predict clinical collapse before symptoms escalate.

AI Edge: Biofourmis applies predictive analytics to continuous biometric streams, identifying subtle deviations in heart rate, respiratory rate and activity patterns that may signal risk.

Rather than waiting for a crisis, it anticipates one, allowing clinicians to intervene early and reduce hospital admissions via data-informed foresight.

Best For: Hospitals, chronic disease programmes, and healthcare systems looking to shift from reactive care to predictive care at scale.

Why it’s nifty: It moves healthcare from firefighting to foresight. Less panic, better prevention.

Productivity: Adept (ACT-1)

Use: Adept’s ACT-1 is an experimental AI agent capable of operating software interfaces like a human by observing workflows and translating natural language into on-screen actions.

AI Edge: Instead of integrating via APIs alone, ACT-1 interacts with digital tools directly through the interface layer: clicking, typing and navigating applications the way you would.

It learns patterns of use and executes multi-step tasks across programs without needing custom integrations.

Best For: Teams exploring advanced automation, operations leads drowning in repetitive workflows and builders experimenting with next-generation AI agents.

Why it’s nifty: It doesn’t just suggest actions, it performs them, which makes the interface executable.

Self Growth: Waking Up

Use: Waking Up is a meditation and philosophy app that offers mindfulness training, contemplative theory and guided practice rooted in neuroscience and non-dualistic traditions.

AI Edge: While not fully AI-native, emerging adaptive layers personalise content suggestions based on engagement patterns, session history and behavioural rhythm, subtly guiding your practice and progression over time.

Rather than overwhelming you with choice, it refines your path.

Best For: Reflective thinkers, meditation practitioners, and anyone exploring consciousness with the help of tech.

Why it’s nifty: It treats meditation like a discipline, not a mood. Depth over novelty.

AI wellbeing tools and resources (coming soon)

📺️ Must-Watch AI Video 📺️

🎥 Lights, Camera, AI! Join This Week’s Reel Feels 🎬

Self Growth: Why Conscious AI Would Be a Catastrophe!

“If we confuse ourselves with our machines, we overestimate them… and underestimate ourselves.”—Anil Seth

What if the biggest danger in AI isn’t that it becomes conscious, but that we think it is?

This chat with Professor Anil Seth, one of the world’s leading neuroscientists and author of Being You, dismantles one of the most seductive illusions of our time: that fluent language equals inner experience.

What it’s about: Anil Seth argues that projecting consciousness onto AI systems is not just naïve, it’s dangerous. When a language model speaks confidently, we instinctively attribute feeling, intention and awareness to it.

But intelligence is not consciousness and doing is not feeling. And if we grant AI rights because we assume it’s conscious, we create a control paradox. You can’t “turn off” something you’ve morally elevated.

That’s the alignment problem nobody wants to think about...

💡 Idea: Consciousness may require life itself. Not computation, not clever code, but metabolism, embodiment and biological processes. Brains are not silicon and data, they are living systems predicting, regulating and surviving.

🌍 At scale: If we reduce ourselves to “meat computers,” we shrink what human intelligence truly is. Emotion, embodiment, perception. Octopuses see with their skin, scrub jays plan their future. Biological minds are diverse and strange. Confusing AI with consciousness flattens that richness.

⚙️ Practical edge: Next time a chatbot sounds eerily human, pause. Ask, is this system feeling anything? Or am I projecting? Fluency is not sentience.

🧠 Best for: Anyone curious about consciousness, AI ethics, human identity and the future of AI + human alignment.

“If we build machines in our image, we must first understand what the image truly is. We don’t even understand ourselves.”

🎒 AI Micro Class 🎒

A quick, bite-sized AI tip, trick or hack focused on wellbeing, productivity and self-growth that you can use right now!

Self Growth: The Silent Divide Between Amateurs and Pros

How to Harness AI to Help You Move From a Zero to a Hero in The Blink of an Eye!

A futuristic Monk in an office of the future

“Stability is a skill. Urgency is a reflex.”

When you see successful people, it’s normal to assume that the gap between amateurs and pros is some sort of god-given talent that you could never hope to attain.

It’s not.

It’s their internal mindset and attitude.

Amateurs panic under pressure.

Pros stabilise.

Amateurs chase breakthroughs like tequila shots.

Pros cultivate clarity like a garden.

So if you’ve ever reacted too quickly, over-explained yourself, or forced a solution that later felt brittle…

…this is for you.

Today you’ll learn:

✅ Why nervous system stability beats raw ambition

✅ How to use AI as a regulation tool, rather than a reaction tool

✅ The two-phase protocol that shifts you from reactor to regulator

Because mastery is not about doing more, it’s about doing less—calmly.

The Real Divide Between Amateurs And Pros

The difference in being better at life, love and business isn’t skill.

It’s regulation.

Amateurs react to pressure.

Pros regulate it.

Amateurs chase breakthroughs.

Pros stabilise first.

Here’s the Modern Monk principle at work…

Nervous System First.

When your body isn’t regulated, your cognition narrows. Studies show stress shifts activity toward threat detection and short-term bias, which means you optimise for relief rather than precision.

You mistake urgency for importance.

Speed for intelligence.

Movement for progress.

That’s default, zombie-like living.

You don’t want that…

A Conscious Operator knows something different…

Clarity collapses when the body is overloaded, so before solving a problem, they stabilise the system generating the solution.

Think of it like tuning a guitar before a performance, you don’t play harder, you tune your instrument first.

Or like Formula 1.

Drivers don’t floor the accelerator on cold tyres, they warm them up first...

Pros do the same; they warm their nervous system first.

Then they move, simply, and fast!

This is Subtraction Before Addition.

You remove noise before optimising output.

Because insight doesn’t respond to panic, it responds to calm and confident spaciousness.

A young boy meditating

The Amateur Loop vs The Pro Loop

Amateur Loop:

Pressure → React → Overthink → Force solution → Short-term relief → Repeat

Pro Loop:

Pressure → Stabilise → Clarify → Act precisely → Preserve energy → Build momentum

One drains.

One compounds.

Mastery is not intensity, it is internal stillness under load.

Ambition shouts.

Mastery silences…

…then stabilises

Now let’s build that silence deliberately.

Phase One: State Stabilisation Protocol 🧠

Before you ask AI for strategy, use it for regulation. This interrupts reactive optimisation, which rarely gets you anywhere...

Purpose: Shift from noise to silent steadiness before solving anything.

The Prompt

[Start prompt]

Act as a state-regulation and clarity system.

Context: [Briefly describe the situation.]

Before offering solutions:

Identify the dominant internal pressure signal

(urgency, defensiveness, scarcity, ego threat, overload).

Name what would shift my nervous system toward regulation

(slower pacing, narrowing scope, deferring action, simplifying frame).

Answer directly:

What is the calmest effective next move available?

Constraints:

Do not optimise for speed.

Do not escalate ambition.

Reduce cognitive noise.

Prioritise stability over performance.

Keep response concise and grounded.

[End prompt]

Then pause.

Let your physiology settle.

You are training sovereignty.

Tools are servants, not masters.

Phase Two: Strategic Clarity (Only After Stabilisation) 🧠

Once you’re steady, optimise.

Purpose: Apply accuracy without distortion.

The Prompt

[Start prompt]

Act as a strategic clarity engine.

Assume internal state is stable.

Context: [Brief description.]

Define the real objective (strip away ego and urgency distortions).

Identify:

The highest-leverage move

The unnecessary complexity

The variable that matters most

Recommend:

One precise action

One thing to deliberately ignore

Constraints:

Favour simplicity over expansion.

Optimise for long-term signal.

Be direct and minimal.

[End prompt]

Regulate.

Then optimise.

Not the other way around.

That is Conscious Operator behaviour.

Before You Optimise, You Need to Stabilise

You live in an unusual environment engineered for acceleration.

Faster replies.

Faster output.

Faster optimisation.

And contrary to popular belief…

AI doesn’t just help you get more done.

It makes your brain move faster.

But moving faster without staying calm can make you shaky. If you use AI when you’re stressed or overwhelmed, it can amplify that stress.

If you use AI when you’re calm and steady, it helps you think more clearly and make better decisions.

That’s the real advantage.

Most apps and systems reward speed.

But real control comes from knowing when to slow down.

AI can either:

Wind you up!

Or help you steady yourself

It depends on the state you bring to it.

The difference is not in the tool.

It’s in the state of the operator.

You!

If you let AI solve from anxiety, you reinforce anxiety.

If you stabilise before you optimise, you reinforce composure.

AI will amplify whatever mental state you bring to it.

Which means the real upgrade is not artificial intelligence.

It’s regulated ‘human’ intelligence.

Technology supports.

You stabilise.

“Acceleration without regulation is just refined chaos.”

🔧 AI Tool Recommendation: Apollo Neuro

If regulation is the dividing line between amateurs and pros, then Apollo Neuro is a physical reminder of that principle.

Apollo is a wearable that gives you gentle, AI-informed vibrations (yes you feel them like a mini machine masseuse) designed to shift your nervous system state in real-time.

Calm.

Focus.

Recovery.

Energy.

Instead of trying to think your way out of stress, it works bottom-up.

When pressure hits, your physiology shifts before your thoughts do. Apollo nudges that physiology back toward safety with silent sound vibrations composed specifically for your body.

Toward steadiness.

This matters.

Because most reactive decisions don’t start with bad logic. They start with an overactivated nervous system. Used intentionally, Apollo is a training partner for composure under load.

You still make the decisions, but you make them from a steadier baseline.

It doesn’t replace discipline.

It supports state.

And state determines strategy.

Tools are servants, not masters.

If your nervous system is chaotic, AI amplifies chaos.

If your nervous system is steady, AI amplifies accuracy.

Apollo helps you build the steadiness first.

🔗 https://apolloneuro.com

What You Learned Today:

✅ The real divide between pros and amateurs is state, not talent

✅ Clarity collapses when the nervous system is dysregulated

✅ Stabilisation must precede optimisation

✅ Subtraction increases signal

✅ Mastery is steady under load

Closing Reflection

In a world engineered for reaction, regulation is rebellion.

I’m not talking about becoming calmer for comfort.

I’m talking about becoming a more regulated, relaxed ‘YOU’.

Choose signal over noise.

Stability over spectacle.

Precision over panic.

That’s the real path from amateur to pro.

And if you felt that difference while reading…

You’re already closer than most.

“If you optimise from panic, you compound instability.”

📸 AI IMAGE GALLERY 📸

AI Art: The Technicolour Time Traveller

“He moves through centuries like a brushstroke of living paint, a technicolour wanderer woven from auroras and memory’s thread. Clocks melt gently around him, pooling into opal-tinted rivers, where past and future braid themselves into shimmering light. His footsteps hum with stories not yet born, as he slips between eras… quiet as a sigh, bright as a dream unbound.”

Want to create these images yourself?

Go to Midjourney and plug this prompt into the editor. Once the image is generated you can use the new video feature to animate it.

A photorealistic, high-angle wide shot in the style of a Wes Anderson film background of the Time Variance Authority monitoring branching timelines. A detective robot is hunting through time. The atmosphere is epic and inspiring, with vibrant details and a playful tone. The overall color scheme includes hex color #1EEDDC. --ar 16:9 --sref 8003740833 5550078511

Artwork + prompt modified by WellWired. Poem created by Cedric The AI Monk.

Future Forward Frank | Technicolour Time Traveller | Robot Renaissance 2099 | Dimensional Detective

👊🏽 STAY WELL 👊🏽

That’s a wrap on today’s Internal Climate edition, where speed slowed down and steadiness took the lead. Today you didn’t chase breakthroughs or force clarity. You stabilised. You noticed your internal weather before trying to fix the forecast. You chose regulation over reaction. You let human intelligence meet artificial intelligence 🧠⚖️

If you want more practices that train your nervous system before your strategy, tools that strengthen sovereignty instead of urgency, or prompts that help you think clearly without thinking faster…

Find me on @cedricchenefront or @wellwireddaily, where intelligence is amplified only after it is stabilised.

Cedric the AI Monk; stay well, stay wired!

P.S. Well Wired is Created by Humans, Constructed With AI 👱🤖

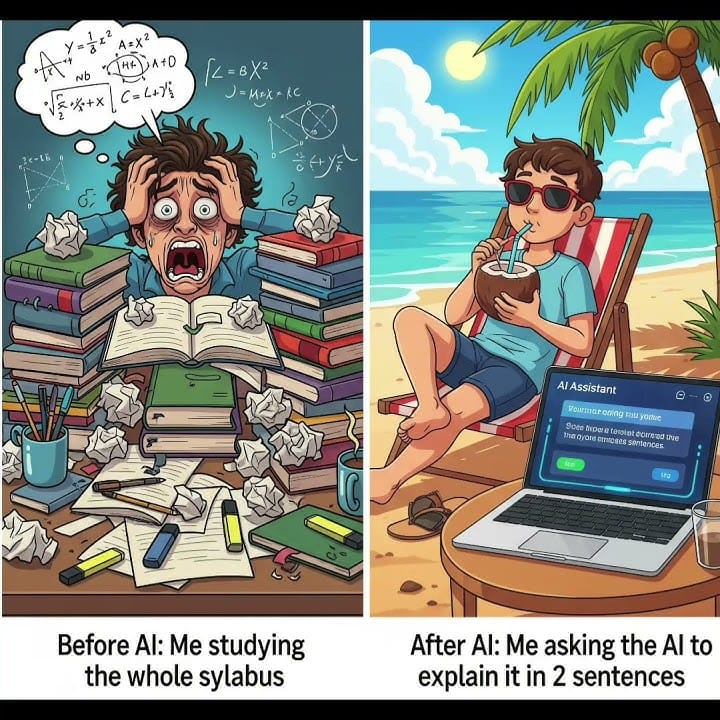

🤣 AI MEME OF THE DAY 🤣

Did we do WELL? Do you feel WIRED?

I need a small favour because your opinion helps me craft a newsletter you love...

Disclaimer: None of the content in this newsletter is medical or mental health advice. The content of this newsletter is strictly for information purposes only. The information and eLearning courses provided by Well Wired are not designed as a treatment for individuals experiencing a medical or mental health condition. Nothing in this newsletter should be viewed as a substitute for professional advice (including, without limitation, medical or mental health advice). Well Wired has to the best of its knowledge and belief provided information that it considers accurate, but makes no representation and takes no responsibility as to the accuracy or completeness of any information in this newsletter. Well Wired disclaims to the maximum extent permissible by law any liability for any loss or damage however caused, arising as a result of any user relying on the information in this newsletter.